|

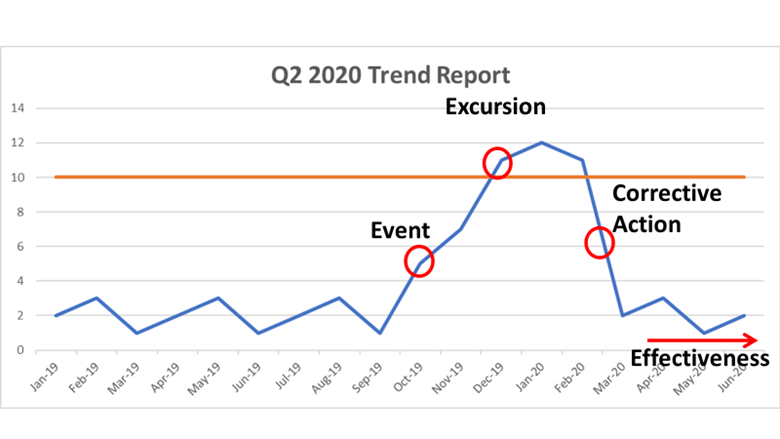

Particle counts were off the chart in an ISO 8 clean room! Literally, the particle counter couldn’t count high enough. A pump was spewing oil with each thrust. The spewing started slowly after a routine maintenance activity. Counts built up slowly over months, then exploded into OOS results, forcing us to act. We fixed the pump and everything was fine again.

Let's talk about the last step of that investigation. We needed to complete an effectiveness check to close the record in our quality system.

We need to be “SMART” with our effectiveness checks! These principles were referenced in so many trainings I’ve been through. SOPs required this “SMART” plan. This is how the effectiveness check for the pump fix was SMART:

Specific - We had a particle excursion in a specific room, so we assessed results from that room. Pretty specific.

Measurable - We measured using the routine test procedure and compared measurements to our SOP/ISO 8 requirements.

Achievable - All results had to pass. We knew it was achievable because the room met requirements when the pump was working before the break.

Relevant - The rationale here was covered with “Specific”. If you had a particle failure, and you wanted to make sure that failure wasn’t ongoing, then you want to know results are good after the fix, right?

Timely - Per procedure, we couldn’t keep effectiveness checks open for longer than 3 months. That guaranteed us 3 post-fix results with our routine monitoring plan. Perfect.

The plan seems logical, right? Unfortunately, every aspect was misguided. In an effort to make the effectiveness check achievable and timely (make it pass, fast!), the plan was designed like too many other poorly designed effectiveness checks: "Look at the same test results for X amount of time." The corrective action is effective if everything passes.

First- The root cause wasn’t the broken pump, it was the yearly maintenance that broke the pump. Our post-fix tests showed we fixed it.
We had no idea if the next maintenance would break it again.

Second- We needed to know if the new maintenance procedure was effective. Since the maintenance was done on a yearly basis and our effectiveness checks were not allowed to be longer than 3 months, the “T” part of the “SMART” effectiveness check was impossible. To avoid keeping the record open for a year, we emphasized the broken pump as the root cause. That way, the effectiveness check just needed to confirm we fixed the pump.

Isn’t that crazy? We feared an effectiveness check requirement, so we changed the root cause. That could have impacted what we did to correct it. If I hadn’t pushed the maintenance group to update their procedure outside of the investigation record, we wouldn’t have had a maintenance fix at all.

This was one of many experiences that made me HATE effectiveness checks. But they need to be done. How else can we show our corrective actions are effective?

Well, let’s see what the FDA has to say. Surely they have some recommendations.

The FDA defines effectiveness checks in their Regulatory Procedures Manual. “'Effectiveness Checks' are actions taken to verify that all ….” Don’t waste your time with the whole definition. It only speaks to recall actions. It has nothing to do with corrective actions for GxP issues.

21 CFR Part 820 also speaks a little to corrective action effectiveness. Manufacturers must have procedures for “Verifying or validating the corrective and preventive action to ensure that such action is effective and does not adversely affect the finished device.” That’s the extent of it. No hints on HOW to do the effectiveness check.

What about FDA warning letters? The FDA demands actions from countless firms that need to fix quality issues. I checked through a bunch of warning letters.
It's always similar wording, something along the lines of: “You should address how you plan to oversee your CAPA program to ensure that you are confident that all corrective actions taken by your firm are verified to be effective.”

The FDA keeps making demands with no guidance on how to meet them! Well, they do make suggestions to bring in quality consultants. Those experts love the SMART plan. The particle example shows how, when that plan is taken out of a vacuum and mixed with metrics and business interests, you get some misguided and superficial effectiveness checks.

So what are we supposed to do? Well, what if we look at it a different way? How does the FDA train themselves to see if a firm’s corrective actions are effective? Following that train of thought, I found a presentation on the FDA’s website for their inspectors. This is the most exhaustive section I can find on effectiveness checks:

“Determine if corrective and preventive actions were effective and verified or validated prior to implementation. Confirm that corrective and preventive actions do not adversely affect the finished device…”

Ok, on par with other FDA documents. Make sure the cure isn't worse than the disease. What else?

“Using the selected sample of significant corrective and preventive actions, determine the effectiveness of these corrective or preventive actions.”

Getting repetitive, determine if changes were effective, but go on…

“This can be accomplished by reviewing product and quality problem trend results. Determine if there are any similar product or quality problems after the implementation of the corrective or preventive actions.”

Finally! Something substantial to determine if a corrective action is effective. It makes perfect sense. You can tell if your corrective action was effective by comparing trends of the problem before and after the action was complete. Firms should already have routine trend monitoring for potential quality problems.
That’s where corrective action effectiveness should be tracked. Annotate the trend with the event at the point where it occurred, and with the corrective action at the point where you expect the trend to change.

Firms feel obligated to keep quality records open until individual effectiveness checks are complete. That's a big time drain and shortsighted. Trend programs, which can cover years of product and environmental data (including other non-conformance trends), should include references to relevant excursions and events. Write your trending procedures to track data associated with those events. That way, you’ll never need to keep quality records open for effectiveness checks again.
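
A minimal sketch of that idea in Python follows. Everything in it is an assumption made for illustration: the readings, dates, and event labels are invented, the names `Event`, `summarize`, and `compare_around` are hypothetical, and the counts are assumed to be particles ≥0.5 µm per cubic meter, where the ISO 14644-1 Class 8 limit is 3,520,000. It keeps events as annotations on the trend and summarizes the data on either side of each one.

```python
from dataclasses import dataclass
from datetime import date
from statistics import median

# ISO 14644-1 Class 8 limit for particles >= 0.5 um, per cubic meter.
# Assumption: the article does not say which particle size was monitored.
ISO8_LIMIT_05UM = 3_520_000


@dataclass
class Event:
    """An annotation on the trend (excursion, repair, procedure change)."""
    when: date
    label: str


def summarize(readings, limit):
    """Summarize a list of (date, count) readings against a limit."""
    counts = [count for _, count in readings]
    return {
        "n": len(counts),
        "median": median(counts) if counts else None,
        "excursions": sum(1 for count in counts if count > limit),
    }


def compare_around(readings, event, limit=ISO8_LIMIT_05UM):
    """Split the trend at the event date and summarize each side."""
    before = [(d, c) for d, c in readings if d < event.when]
    after = [(d, c) for d, c in readings if d >= event.when]
    return summarize(before, limit), summarize(after, limit)


# Invented monthly readings for one room: a slow climb, then a fix.
readings = [
    (date(2019, 1, 15), 900_000),
    (date(2019, 2, 15), 1_400_000),
    (date(2019, 3, 15), 2_600_000),
    (date(2019, 4, 15), 4_100_000),  # above the limit
    (date(2019, 5, 15), 800_000),
    (date(2019, 6, 15), 750_000),
    (date(2019, 7, 15), 820_000),
]

# Invented annotations: the fix, and the next run of the revised procedure.
events = [
    Event(date(2019, 5, 1), "Pump repaired"),
    Event(date(2020, 1, 10), "Yearly maintenance under revised procedure"),
]

for ev in events:
    before, after = compare_around(readings, ev)
    print(ev.label, "| before:", before, "| after:", after)
```

With this invented data, the pump repair shows one excursion before and none after. The second event has no readings after it yet, so its check simply stays pending in the trend instead of holding a quality record open.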

This is a boring subject that I could talk about for hours. I have some more examples I want to add to this, but I’ll save that for another day. Also- It’s exhausting digging up all the FDA’s thoughts on this issue. Let me know if the FDA or other regulatory agencies have other documents that address effectiveness checks. I’d love more background!

2 Comments

Dana

11/7/2020 07:02:46 am

Hi Jon,

Jon

11/7/2020 05:28:42 pm

Thanks Dana! I'm struggling to find something specifically labeled as the preamble. Do you have the text/link for that?

|