|

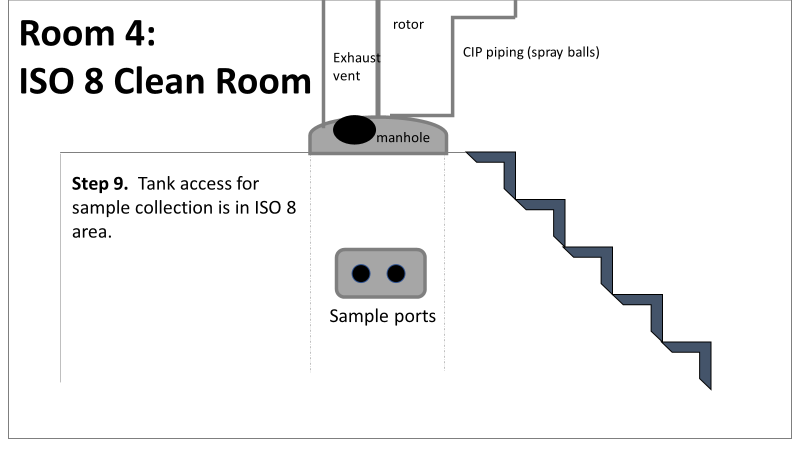

Welcome to part 2 of this investigation series! You can catch up on Part 1 right here.

This investigation started with an immediate smoking gun! The pooling vessel had foaming issues and frozen broth chunks. The operators stuck a pool strainer into the tank to mix things up. You read that right. They used a non-controlled pool strainer that’s not mentioned in any procedure. I still have no idea where it came from. They brought it through a CNC/ISO 9 transition zone, moved it through an ISO 9/ISO 8 transition zone, opened the manhole entrance at the top of the tank, stuck it in the broth, then swirled it around for half an hour.

When I interviewed the operators, they emphasized the exorbitant amount of alcohol used to sanitize the tool in each transition zone. They wiped down each and every crevice, including inside the extension poles. But this was as obvious a smoking gun as possible.

We didn’t want to delay the start of the next engineering run (the last one scheduled). I wrote up the investigation report, also addressing a few extra potential contributors like maintenance performed on the mechanical transfer arm during the thawing process and an issue with the sample tubing. The report included all WFI, Environmental Monitoring (EM), and cleaning data proving the site and equipment were otherwise in control. All reviewers, including the Microbiology department head and the site Quality Assurance director, were happy with the report. It closed on time and the next run could move forward as planned.

I watched that next engineering run with interest. Some self-doubt crept in, and I had some perspective on the contamination that didn’t quite jibe with the initial report. I snuck into the lab a day before the bioburden test plates were scheduled to be read. The plates looked exactly the same as the previous run. Covered in Bacillus; >250 CFU/mL.

Now things got scary. The site planned to run their first commercial batch in a month, but the previous two runs had overwhelming bioburden counts. A superstar team was put together.

This investigation had everything. It was treated with the intensity of a final product sterility failure, even though ~10 downstream bioburden samples were collected after further purification steps. We followed a DMAIC (Define, Measure, Analyze, Improve, Control) road map, with all the investigative tools you could ask for, like:

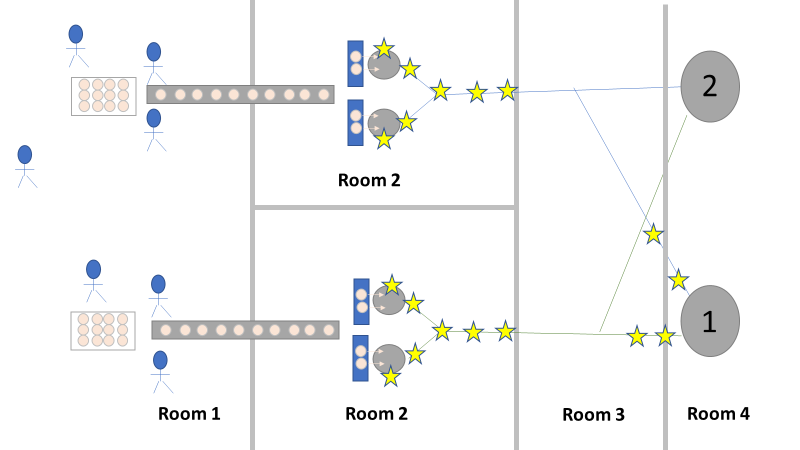

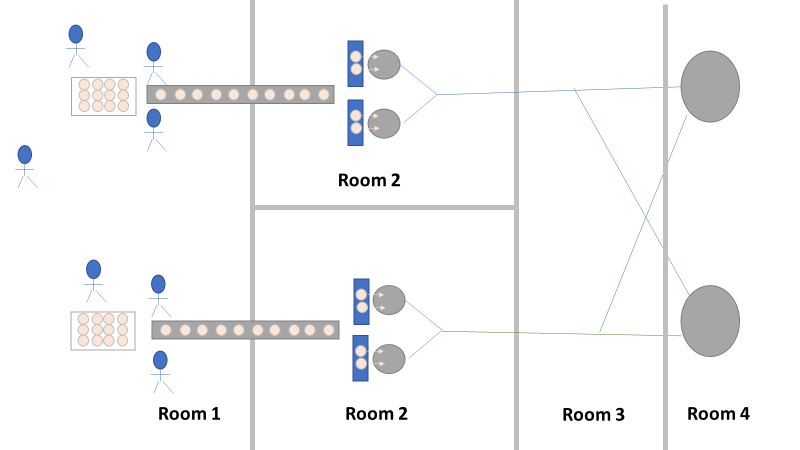

We even had another smoking gun that somehow didn’t come up during the first investigation! The first three engineering runs used a single pair of thaw vessels, the same pair each time. The 4th and 5th runs used both thaw vessel pairs (as seen in the overhead diagram). The organism had to be coming from this second thaw vessel chain, right?

We thought so too, so we put together a major swabbing effort to see if we could find our Bacillus organism. We swabbed both pairs of thaw vessels and the piping leading from them to the pooling vessel. We hoped the pair used for the first 3 runs would function as a control. The stars on the overhead diagram represent the approximate areas we swabbed. Keep in mind, all runs were performed using the pooling vessel marked “1”. The pooling vessel marked “2” (and the piping leading to it) was never used for these engineering runs. The equipment CIP was performed soon after the 5th run was complete. Swabbing began a few days after the bioburden results were generated, so about a week passed between cleaning and swabbing.

Guess what? We saw our Bacillus organism in almost every location we sampled. Both sets of thaw vessels. The counts were highest downstream near the pooling vessel, dwindling to a single colony (if any) on the thaw vessel rims.

I gave a couple of spoilers about non-root-causes in Part 1 of this series. My spoiler in Part 2: these results were accurate representations of what was swabbed. There were no sampling or testing errors that threw us off. As all raw material moved in a single direction through the equipment, the group came to the following conclusions:

Fortunately, both of those conclusions were dead wrong. Unfortunately, it took two rejected lots to figure that out. In the next part of this story, I’ll explain why the formulaic investigation techniques (i.e. the alphabet soup of techniques listed above) forced the investigation team to continue acting on those conclusions. I’ll also go into how the team should have been looking at this problem. This alternative mindset ended up saving a 3rd lot at the last minute. Check out Part 3, Part 4, and Part 5 here!


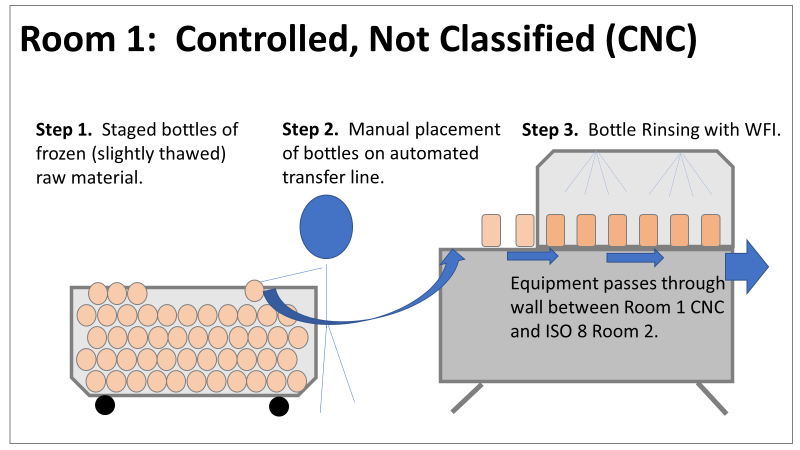

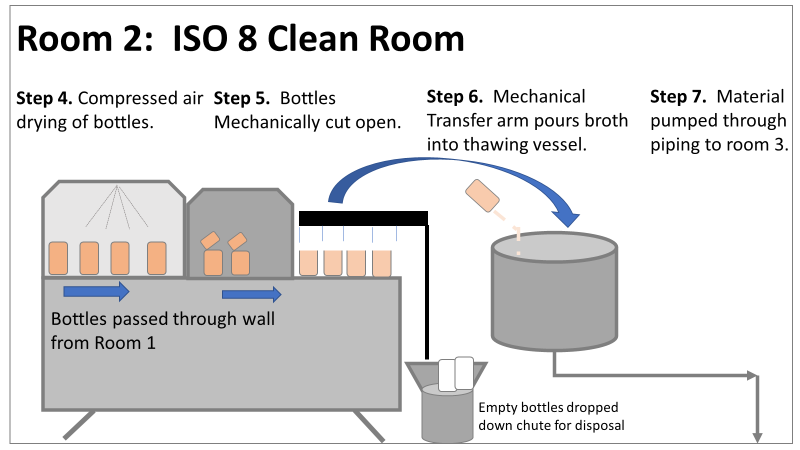

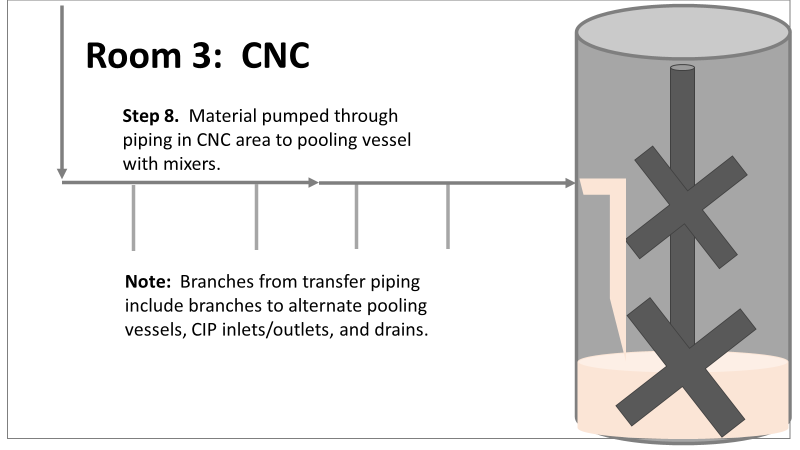

Welcome to my series about critical investigations I've worked on! This is part 1 of an investigation I call "The Million Dollar Rejected Lots". This investigation determined over two million dollars in raw material needed to be destroyed.

First off, writing these stories with an interesting narrative is hard. I want to write these out as a mystery so you can experience what I did going through the investigation. But, with the benefit of hindsight, I know which things threw off my team during these investigations. Aspects that threw off my team for days turned out to be pretty irrelevant, so it's hard to fit them into the story. I’m struggling to present those aspects in an intriguing way that doesn’t give away the ending. Let’s see how this goes!

This investigation was for massive bioburden (Bacillus) contamination seen in upstream processing of a biologic pharmaceutical. The main raw material cost around $1 million for each batch. We had to discard this material for each failing result. The material itself didn’t help. Although we had good reason to believe it was sterile, the material was a nutrient buffet. Think of it as a growth-promoting broth ice cube. Spoiler alert: the raw material was not the root cause of this investigation.

So what’s our problem statement? A new manufacturing site was performing engineering runs for a new product. Three engineering runs were performed with minimal bioburden recoveries. On the 4th run, bioburden Too Numerous To Count (TNTC, >250 CFU/mL) was recovered from the test sample. The growth was so heavy there were serious conversations about the result being documented as a single colony that happened to grow so big it covered the entire filter. The test sample represents the pooling of all individual units of raw material used for the batch. No excipients or water are added to the batch at this time. The site planned a total of 5 engineering runs. The 6th run was scheduled for commercial sale.
Not only was the manufacturing process expensive, the product was a life-saving drug in short supply. The pressure was on.

Below is a diagram of the raw material processing. The diagram starts where the material is moved out of frozen storage to a non-classified area. Each bottle is manually placed on a conveyor belt to be taken into an ISO 8 clean room. While on the conveyor belt, the bottles are rinsed with WFI, dried off with compressed air, and cut open in the ISO 8 area. A mechanical transfer arm then pours the bottles into a thawing vessel. At this point the frozen broth is melted enough to be pumped through transfer piping to a pooling vessel. When the entire batch of broth (~7000 liters) is in the pooling vessel, the sample is collected.

It’s important to note: this process is slow! To speed it up, a total of 4 thaw vessels are used for each batch. The system is even set up to transfer into 2 different pooling vessels as needed. A valve on the transfer piping determines which pooling vessel receives the broth. Even when all 4 thaw vessels were transferring into a single pooling vessel, it took about 16-20 hours to pool all the broth.

The picture below shows how the conveyor belts, pooling vessels, and transfer pipes are set up from a top-down perspective. The stick figures shown in room 1 highlight the amount of activity that occurs there. It is a high-traffic area as people transfer product out of freezers, place bottles on the conveyor belt, and monitor the process in multiple ways. Room 2 was off limits while the mechanical transfer arms were operating, but personnel did need to enter the room a few times a run to perform maintenance.

There were a few other factors we knew off the bat.

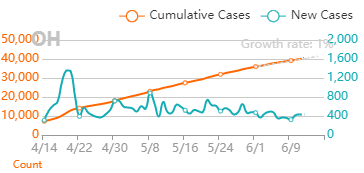

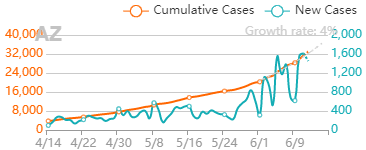

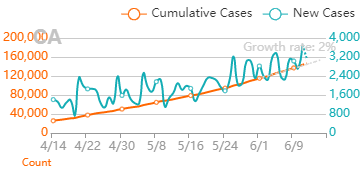

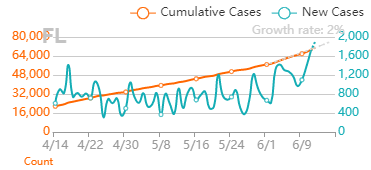

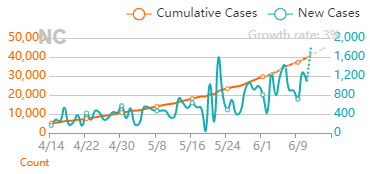

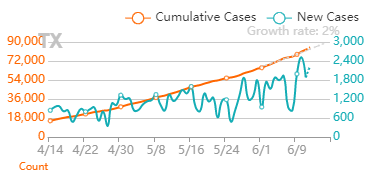

What are your thoughts? What would you look at for this investigation? In part 2, I'll describe the superstar team we put together, what we looked at, and why it was terribly wrong. Links to the following parts here: Part 2 Part 3 Part 4 Part 5

You can hear the groans roll through the department when it happens. Missed sample collection! This happens way more frequently than any Quality Control group wants to admit. It’s somehow always an analyst’s fault for not getting the sample(s). Let’s look at one of those times…

…A supervisor hears a couple of WFI ports weren’t sampled the previous week. Those sites require testing every week. That’s a deviation from procedure, so she opens an investigation. In this department, sampling plans are allocated to each analyst. The plans outline the ports they’re supposed to get each week and the days they’re usually collected. The plans are allocated a few weeks in advance. The missed samples were set for Friday collection. The analyst assigned to those samples was present that day but did not attempt to collect them. The supervisor talked to the analyst. The analyst confessed to forgetting those samples. The SOP stated there was no impact for this situation, so this investigation could be closed quickly. Sound familiar?

6Ms were reviewed for this event too:

Measurement/Materials/Mother Nature/Machinery: These M’s did not play a role in this deviation and are not considered the root cause.

Method: The method clearly states these samples must be collected on a weekly basis. The method has been followed correctly for years. Method is not the root cause.

Manpower: The analyst was aware of her sampling assignment for the week. She did not collect these samples. There was no barrier preventing her from collecting the samples. The analyst admitted she forgot to collect them. Manpower is the root cause.

This is another low-impact investigation where the analyst admitted to a mistake. Easy manpower root cause! We can talk to the analyst, document this “talking-to” as the corrective action, and close the event. Deviation closing metrics look great again!

But I wouldn’t be bringing up this event if it were that simple. For years, these specific locations were routinely collected on Mondays. Management decided previous test results were only representative of the site on Mondays. Since the data did not represent the site over the course of the week, they started a program to rotate the days each sample was collected. This was a good change.
But what a coincidence: these missed samples occurred the first week this program was implemented.

Now let’s throw in something else: the analyst responsible for collecting the sites was sick on Monday that week. Monday, the day these samples were previously scheduled for sampling on her sample plan. Monday is also the start of the week, the day the supervisors officially rolled out the plan. When an analyst is out for the day, the supervisor assigns a back-up to collect samples on the analyst’s plan. These samples weren’t included because they were no longer collected on Mondays. By the end of the week, the analyst had collected all samples she was assigned to except for the ones previously collected on Mondays, the day she was absent.

Let’s add another twist: the supervisor is responsible for reviewing which samples were collected each week. This allows the weekend shift to get samples not collected during the week. The supervisor assigned someone to this review, and the assigned reviewer stated all samples were collected. The supervisor reported that to the weekend shift without confirming the data.

I didn’t perform this investigation. I discovered the missing data that prompted the event, so I had a vested interest in it. But guess who did the write-up? The supervisor. I worked with her to flesh out the extenuating circumstances they should investigate. Those circumstances are hard to discuss in an investigation. How do you cite a non-controlled review process or sampling plan as a root cause? How do you implement a corrective action for something that management wants to keep outside of a formal procedure?

After talking to me, the investigating supervisor talked to a different supervisor (the one responsible for tracking investigation metrics). During that discussion, the decision was made to remove any references to the sampling plan and data review. The root cause was attributed to a simple manpower error.
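The review gap described above (a reviewer stating "all samples were collected" without anyone confirming the data) is the kind of check that is easy to automate. Below is a minimal sketch, not the site's actual system: the port names, the log format, and the function `find_missed_ports` are all hypothetical and exist only for illustration. It compares the ports that require weekly sampling against what was actually logged, regardless of which day or analyst each port was assigned to.

```python
def find_missed_ports(required_ports, collection_log):
    """Return the required ports with no logged collection this week, sorted.

    required_ports: iterable of port IDs that must be sampled weekly.
    collection_log: list of dicts, one per collected sample, each with a "port" key.
    Both structures are hypothetical stand-ins for a real sampling record.
    """
    collected = {entry["port"] for entry in collection_log}
    return sorted(set(required_ports) - collected)


# Hypothetical week: three WFI ports are required, only one was logged.
required_ports = ["WFI-01", "WFI-02", "WFI-03"]
collection_log = [{"port": "WFI-01", "day": "Tue", "analyst": "back-up"}]

missed = find_missed_ports(required_ports, collection_log)
print("Hand off to weekend shift:", missed)
```

Because the check keys on the port and not on the scheduled day, a change like rotating collection days would not hide a missed sample from the weekend hand-off.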
Investigations are an opportunity to see process gaps and improve upon them. These improvements lead to fewer mistakes and higher quality products. In this case, the quality of the investigation was sacrificed to make metrics look good. An unfortunate employee was caught in the middle.

This is a story about an analyst performing a biochemical test. She preps her samples, loads them into the test equipment, and enters sample info into the test software. Then she runs the test (she clicked "run" and went to lunch). After the test is complete, she confirms the assay is valid and results meet requirements. She cleans up her work area, notifies manufacturing they can continue processing, disposes of the samples, and hands her paperwork in for review.

Her supervisor notices a test parameter was entered incorrectly. The analyst used "0" for parameter X. Per the SOP, parameter X must be "100" for all samples. The software was able to re-calculate the results after the fact. The difference in results was barely a rounding error. But manufacturing was told to continue processing based on an inaccurate result, which was technically a deviation.

The analyst and her supervisor discussed the error. The analyst immediately admitted to the mistake. The method clearly said to use 100 for parameter X. The event had no impact on the product, so the investigation could close quickly, but we wanted to use the 6Ms to show our due diligence. Here's how the 6Ms were considered (very abridged versions):

Measurements: The parameter was entered incorrectly, and the parameter is used for the test measurement. But that's the problem statement.

Materials: All materials were used correctly for this test.

Mother Nature/Milieu: The environment did not contribute to this issue.

Machinery: The machinery was calibrated and functioning correctly at the time.

Method: The method clearly states parameter X must be set to 100.

Those 5Ms didn't need much consideration for the root cause.
Those factors had all been in place for years with no previous deviations like this. Now, we get to...

Manpower: The analyst did not enter Parameter X correctly. There was no physical or electronic barrier stopping her from entering it. She had completed the test with the correct value entered multiple times before.

Even with the 6Ms, it’s obvious the analyst made a mistake. We could talk to the analyst, document a “talking-to” as the corrective action, and close the event. Metrics for closing deviations in less than 30 days would continue to look great. The root cause must be manpower, right? What idiot wastes more time with this investigation?

Me. I'm that idiot. I drove the area manager nuts with this. I talked to that analyst about how the parameter is normally entered in the system. The answer was interesting: she doesn’t normally enter the parameter. Analysts are taught to copy and paste information from a previous line when performing new tests. The analyst only needs to change test-specific info. This saves the company lots of time over dozens of tests each day. As parameter X never changes, analysts never do anything to that field.

This analyst was performing a single test that session, so she created a new blank line to enter sample information. The analyst felt it was easier for a single sample, and it is also the more compliant practice. The blank line defaults parameter X to 0. As she never adjusted this value in her training or in previous tests (and because this was a time-sensitive sample), she didn’t think to look at it. By investigating this issue just a little bit more, we uncover a lot:

All we had to do was re-program the default value to 100, and we would never see the issue again. If you do the routine 6M investigation, you naturally blame manpower. This correction is NEVER discovered or implemented with that conclusion.

So what should we call the root cause? I definitely wouldn't consider it to be the analyst that ran the test. But it really doesn't matter. Check out my video on the futility of narrowing a root cause to a 1-2 word phrase here:

Another thing worth noting here: around the time this event occurred, the company was rolling out a new policy. If you were the “manpower” root cause for an event, it would have a direct impact on your bonus and performance review. This policy could only impact front-line, entry-level employees that handle GMP tasks. In a very short-sighted way, the company would save money on bonuses by jumping to blaming people. How would you feel working under that management? I know I way over-simplified the rationale here, but optics like this matter.
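To make the default-value fix concrete, here is a minimal sketch of what it amounts to. This is not the vendor's test software: `SampleLine`, `validate`, and the field names are hypothetical, and the only facts taken from the story are that the SOP requires 100 for parameter X and that a new blank line used to default to 0. The sketch pairs the corrected default with a simple check that flags any line deviating from the SOP value before results are released.

```python
from dataclasses import dataclass

# Value the SOP requires for every sample (from the story above).
REQUIRED_PARAMETER_X = 100


@dataclass
class SampleLine:
    """Hypothetical stand-in for one sample entry line in the test software."""
    sample_id: str
    # The fix: a new blank line starts at the SOP value instead of 0.
    parameter_x: int = REQUIRED_PARAMETER_X


def validate(line: SampleLine) -> list[str]:
    """Return a list of problems that should block release of the result."""
    problems = []
    if line.parameter_x != REQUIRED_PARAMETER_X:
        problems.append(
            f"{line.sample_id}: parameter X is {line.parameter_x}, "
            f"SOP requires {REQUIRED_PARAMETER_X}"
        )
    return problems


new_line = SampleLine("S-001")                 # blank line with corrected default
old_style = SampleLine("S-002", parameter_x=0)  # what the old default produced

print(validate(new_line))
print(validate(old_style))
```

Either half alone would likely have prevented the deviation: the default removes the trap, and the check catches a wrong value no matter how it got there.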

The 6Ms are terrible for these types of investigations. You really need to walk through a process with the people involved to figure out what happened, even for ones that seem trivial. Please, if you’re doing an investigation, challenge yourself to find the actual root cause. |